From Toy Debates to Autonomous Engineering Teams with CrewAI

Two debaters and a judge, a sandboxed coder, a web-searching researcher, a stock picker with memory, and a full engineering team that writes, tests, and reviews its own code. All running on local GPUs.

I discovered CrewAI through this Udemy course. This post walks through five levels of increasing complexity, from a toy debate to autonomous software engineering.

Level 1: The Basics (The Debate Team)

Theme: Pure Interaction | Code

The journey begins with the Debate project—the "Hello World" of agent orchestration.

Here, we have three simple agents: two Debaters and a Judge. The complexity is minimal, but the core idea matters: Role-Playing.

- The Setup: One debater argues for the motion, the other argues against it, and the judge decides who made the stronger case.

- The Feature: Pure prompt-based personalities. No complex prompt-engineering variables required, just `role`, `goal`, and `backstory`.

```yaml
# debate/config/agents.yaml
debater:
  role: A compelling debater
  goal: Present a clear argument...
judge:
  role: Decide the winner...
```

Key Takeaway: With just a few lines of YAML, you can create distinct personalities without tools or memory—just pure LLM-to-LLM interaction.
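To see why three short fields are enough, here is a framework-free sketch of the same pattern. `Persona`, its prompt template, and `run_debate` are hypothetical illustrations, not CrewAI's API (CrewAI builds its own, longer prompt from `role`, `goal`, and `backstory`). Each model is just a callable, so any local model client can be plugged in.

```python
from dataclasses import dataclass
from typing import Callable

# A model is any callable taking (system_prompt, user_prompt) and returning text.
LLM = Callable[[str, str], str]


@dataclass
class Persona:
    role: str
    goal: str
    backstory: str

    def system_prompt(self) -> str:
        # Hypothetical template: the persona is nothing more than prompt text.
        return f"You are {self.role}. {self.backstory}\nYour goal: {self.goal}"


def run_debate(motion: str, proposer: Persona, opposer: Persona,
               judge: Persona, llms: dict[str, LLM]) -> str:
    """Sequential flow: argue for, argue against, then judge both arguments."""
    case_for = llms["proposer"](proposer.system_prompt(),
                                f"Argue FOR the motion: {motion}")
    case_against = llms["opposer"](opposer.system_prompt(),
                                   f"Argue AGAINST the motion: {motion}")
    # The judge sees only the two arguments, never the debaters' prompts.
    return llms["judge"](
        judge.system_prompt(),
        f"Motion: {motion}\n\nFOR:\n{case_for}\n\nAGAINST:\n{case_against}\n\n"
        "Decide which side is more convincing and explain why.",
    )
```

In the real project, each entry in `llms` would wrap a different local model (gpt-oss:20b, deepseek-r1:8b, llama3.1:8b), which is what gives the debate its variety.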

Results:

Topic: There needs to be strict laws to regulate LLMs.

Proposer: gpt-oss:20b

Opponent: deepseek-r1:8b

Judge: llama3.1:8b

gpt-oss takes the win: Llama sides with the proposer. Here is Llama's verdict:

Final Output: After carefully reviewing the arguments presented by both sides, I have come to the conclusion that the motion "There needs to be strict laws to regulate LLMs" is more convincing.

The proponents of strict regulation present a comprehensive and well-reasoned argument that highlights the numerous risks associated with unregulated LLMs. They convincingly argue that without legal oversight, malicious actors can exploit these systems to manipulate elections, incite violence, or spread pandemics of falsehoods. Furthermore, they emphasize the importance of protecting individual privacy rights by mandating transparent data sourcing, anonymization standards, and user consent.

The opponents' argument, on the other hand, relies heavily on the potential for restrictive regulations to stifle innovation and hinder technological progress. However, I believe that this argument is less compelling due to its vagueness and lack of concrete evidence. The opponents argue that the rapid evolution of LLMs outpaces legislative efforts, rendering strict laws quickly outdated. While this may be true, it does not necessarily follow that strict laws are inherently counterproductive.

In contrast, the proponents provide a detailed analysis of specific risks and propose targeted solutions to address them through legislation. They emphasize the importance of balancing innovation with protection of human rights, democratic integrity, and societal well-being. I am convinced by their argument that strict laws can create a predictable, safe, and equitable environment in which LLMs can deliver their transformative benefits without becoming sources of harm.

Furthermore, I believe that the proponents' emphasis on transparency and accountability through mandatory disclosure requirements is essential for building public trust and ensuring that end-users can scrutinize AI recommendations before accepting them. This approach aligns with the principles of democratic governance and human rights, which should be the foundation of any regulatory framework governing LLMs.

In conclusion, based on the arguments presented, I am convinced that strict laws to regulate LLMs are necessary to safeguard society, ensure accountability, and preserve democratic values. The potential benefits of regulation far outweigh the perceived risks and limitations associated with restrictive legislation.

Level 2: Safe Code Execution (The Coder)

Theme: Agency with Guardrails | Code

Next, we graduate to the Coder project. This is where things get real. An agent that just talks is fun; an agent that does things is useful.

Giving an AI unrestricted access to your terminal is terrifying. CrewAI solves this with a simple feature:

- The Setup: The Coder agent can write and execute Python code directly.

- The Feature: `code_execution_mode="safe"`. The codebase configures the agent to run code inside a Docker container.

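```python
# coder/crew.py
from crewai import Agent

agent = Agent(
    role="coder",
    goal="Write and run Python code that solves the assignment",  # illustrative; goal is required
    backstory="A careful Python developer.",  # illustrative; backstory is required
    allow_code_execution=True,
    code_execution_mode="safe",  # Dockerized safety: code runs in a container
    llm="ollama_chat/deepseek-r1:8b",
)
```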

Key Takeaway: You can run powerful coding agents (like deepseek-r1) locally with far less risk to your host machine, because the code they write executes inside a container rather than directly on your system.
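In safe mode CrewAI manages the container for you. To make the idea concrete, here is a hypothetical sketch of what a Docker sandbox for generated code can look like; the image name and resource limits are my assumptions, not CrewAI's actual settings.

```python
import shutil
import subprocess


def docker_sandbox_cmd(code: str, image: str = "python:3.12-slim") -> list[str]:
    """Build a `docker run` command that executes `code` in a throwaway container."""
    return [
        "docker", "run",
        "--rm",               # delete the container when it exits
        "--network", "none",  # generated code gets no network access
        "--memory", "256m",   # cap memory
        "--cpus", "1",        # cap CPU
        "--read-only",        # container root filesystem is read-only
        image,
        "python", "-c", code,
    ]


def run_sandboxed(code: str, timeout: float = 30.0) -> str:
    """Run `code` in Docker and return stdout (or stderr if it failed)."""
    if shutil.which("docker") is None:
        raise RuntimeError("docker is not on PATH")
    # On timeout this kills the docker CLI process; a hardened version would
    # also name the container and `docker kill` it.
    result = subprocess.run(docker_sandbox_cmd(code), capture_output=True,
                            text=True, timeout=timeout)
    return result.stdout if result.returncode == 0 else result.stderr
```

With Docker installed, `run_sandboxed("print(sum(range(10)))")` should return `45` plus a newline. The design choice is to deny by default: no network, capped resources, and a read-only filesystem, so a bad generation fails inside the box instead of on the host.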

Level 3: Connecting to the World (The Financial Researcher)

Theme: Tool Use | Code

The Financial Researcher project introduces Tools.

A smart agent is useless if it's cut off from the world. This crew is composed of a Researcher and an Analyst.

- The Setup: A workflow where the Researcher searches the web for real-time data, and the Analyst synthesizes that raw data into a markdown report.

- The Feature: `SerperDevTool`. The agent isn't stuck guessing from its training data anymore; it's equipped with a tool for live Google searches through the Serper API. No more "I'm sorry, my knowledge cutoff is 2021."

Key Takeaway: This demonstrates the classic "Research & Write" pattern, perfect for automating daily briefings.
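Stripped of the agents, "Research & Write" is two steps: fetch raw results, then turn them into a report. Below is a minimal sketch under stated assumptions: the request targets Serper's search endpoint (`POST https://google.serper.dev/search` with an `X-API-KEY` header), `parse_hits` assumes the JSON response carries an `organic` list whose items have `title`, `link`, and `snippet` fields, and the Analyst step is a plain template, whereas in the crew it is an LLM.

```python
import json
import urllib.request
from dataclasses import dataclass

SERPER_URL = "https://google.serper.dev/search"


@dataclass
class Hit:
    title: str
    link: str
    snippet: str


def build_search_request(query: str, api_key: str) -> urllib.request.Request:
    """Researcher step: a Serper search request (built here, not sent)."""
    body = json.dumps({"q": query}).encode("utf-8")
    return urllib.request.Request(
        SERPER_URL,
        data=body,
        headers={"X-API-KEY": api_key, "Content-Type": "application/json"},
        method="POST",
    )


def parse_hits(payload: dict, limit: int = 5) -> list[Hit]:
    """Keep the top organic results (assumed response shape)."""
    return [Hit(r.get("title", ""), r.get("link", ""), r.get("snippet", ""))
            for r in payload.get("organic", [])[:limit]]


def write_report(company: str, hits: list[Hit]) -> str:
    """Analyst step: turn raw hits into a markdown report."""
    lines = [f"# {company}: research notes", ""]
    for h in hits:
        lines.append(f"- [{h.title}]({h.link}): {h.snippet}")
    if not hits:
        lines.append("_No search results found._")
    return "\n".join(lines)
```

To actually send the search, open the request with `urllib.request.urlopen(req)` and pass `json.load(resp)` to `parse_hits`. Keeping fetching and writing separate is the point of the pattern: each step can be swapped (a different search tool, a different model) without touching the other.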

Level 4: Memory & Structure (The Stock Picker)

Theme: Advanced Cognition | Code

Now we enter the big leagues with the Stock Picker project. This introduces two advanced concepts: Memory and Structured Outputs.

- The Setup: The crew uses `LongTermMemory` (SQLite) to store insights across runs and `ShortTermMemory` (RAG) to maintain context within a run. It uses local embeddings (`nomic-embed-text`) to keep memory private.

- The Feature: `output_pydantic`. Instead of a wall of text, agents return strictly typed Pydantic objects.

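```python
# stock_picker/crew.py
from pydantic import BaseModel
from crewai import Task
from crewai.project import task

class TrendingCompany(BaseModel):
    name: str
    ticker: str
    reason: str

class TrendingCompanyList(BaseModel):  # wrapper model; field name assumed
    companies: list[TrendingCompany]

@task  # a method on the @CrewBase crew class; output_pydantic is a Task argument
def find_trending_companies(self) -> Task:
    return Task(config=self.tasks_config["find_trending_companies"],
                output_pydantic=TrendingCompanyList)
```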

Key Takeaway: Structured output makes it far more reliable to pipe AI generation into a database or API, because the response is validated against a schema instead of scraped out of free text.
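Long-term memory boils down to writing lessons somewhere durable and feeding them into the next run's prompt. This toy `InsightStore` is a hypothetical illustration of that idea using Python's built-in `sqlite3`; it is not CrewAI's `LongTermMemory` schema, and it skips the embedding-based retrieval that CrewAI's short-term memory adds.

```python
import sqlite3
import time


class InsightStore:
    """Toy long-term memory: insights survive between runs in a SQLite file."""

    def __init__(self, path: str = "memory.db") -> None:
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS insights ("
            " id INTEGER PRIMARY KEY AUTOINCREMENT,"
            " task TEXT NOT NULL,"
            " insight TEXT NOT NULL,"
            " created REAL NOT NULL)"
        )

    def remember(self, task: str, insight: str) -> None:
        with self.conn:  # commits the insert (or rolls back on error)
            self.conn.execute(
                "INSERT INTO insights (task, insight, created) VALUES (?, ?, ?)",
                (task, insight, time.time()),
            )

    def recall(self, task: str, limit: int = 3) -> list[str]:
        """Most recent insights for a task, newest first."""
        rows = self.conn.execute(
            "SELECT insight FROM insights WHERE task = ? ORDER BY id DESC LIMIT ?",
            (task, limit),
        ).fetchall()
        return [row[0] for row in rows]

    def close(self) -> None:
        self.conn.close()


def prompt_with_memory(store: InsightStore, task: str, base_prompt: str) -> str:
    """Append lessons from earlier runs to this run's prompt."""
    past = store.recall(task)
    if not past:
        return base_prompt
    notes = "\n".join(f"- {p}" for p in past)
    return f"{base_prompt}\n\nLessons from previous runs:\n{notes}"
```

Because the data lives in a file rather than in the process, a second run that opens the same `memory.db` sees what the first run learned.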

Level 5: The Enterprise (The Engineering Team)

Theme: Orchestration & Delegation | Code

Finally, the Engineering Team project. This is the pinnacle of the experiment.

It simulates a full software development lifecycle with specialized roles: Lead, Backend Engineer, Frontend Engineer, and QA.

- The Setup: Tasks are chained contextually. The Backend Engineer doesn't start until the Lead finishes the design, and QA waits for the code.

- The Feature: Multi-Model Intelligence. Different roles are routed to different models (`gpt-oss:20b` for high-level design, `qwen3-coder:30b` for the heavy lifting).

```yaml
# engineering_team/config/tasks.yaml
backend_engineer:
  output_file: output/{module_name}
frontend_engineer:
  output_file: output/app.py
```

Key Takeaway: A crew can take an abstract idea and output a fully tested, functional application with frontend and backend, saved directly to disk.
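Context chaining and per-role model routing can be sketched without CrewAI as a small dependency graph. This is a hypothetical illustration: the task names, and the choice of `qwen3-coder:30b` for the Frontend Engineer and QA, are my assumptions, and the ordering comes from the standard library's `graphlib.TopologicalSorter` rather than from CrewAI's own process.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter
from typing import Callable


@dataclass
class PlannedTask:
    name: str
    role: str
    model: str
    context: list[str] = field(default_factory=list)  # tasks whose output this one reads


# Hypothetical plan: design on gpt-oss, implementation work on qwen3-coder.
PLAN = [
    PlannedTask("design", "Lead", "gpt-oss:20b"),
    PlannedTask("backend", "Backend Engineer", "qwen3-coder:30b", ["design"]),
    PlannedTask("frontend", "Frontend Engineer", "qwen3-coder:30b", ["backend"]),
    PlannedTask("tests", "QA", "qwen3-coder:30b", ["backend"]),
]


def execution_order(plan: list[PlannedTask]) -> list[str]:
    """Order tasks so every task runs after the tasks in its context."""
    graph = {t.name: set(t.context) for t in plan}
    return list(TopologicalSorter(graph).static_order())


def run_plan(plan: list[PlannedTask],
             call_model: Callable[[str, str, str], str]) -> dict[str, str]:
    """call_model(model, role, context_text) -> output; outputs feed later tasks."""
    by_name = {t.name: t for t in plan}
    outputs: dict[str, str] = {}
    for name in execution_order(plan):
        task = by_name[name]
        context_text = "\n\n".join(outputs[dep] for dep in task.context)
        outputs[name] = call_model(task.model, task.role, context_text)
    return outputs
```

A cycle in `context` makes `static_order()` raise `graphlib.CycleError`, which is a cheap way to catch a mis-wired plan before any model runs.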

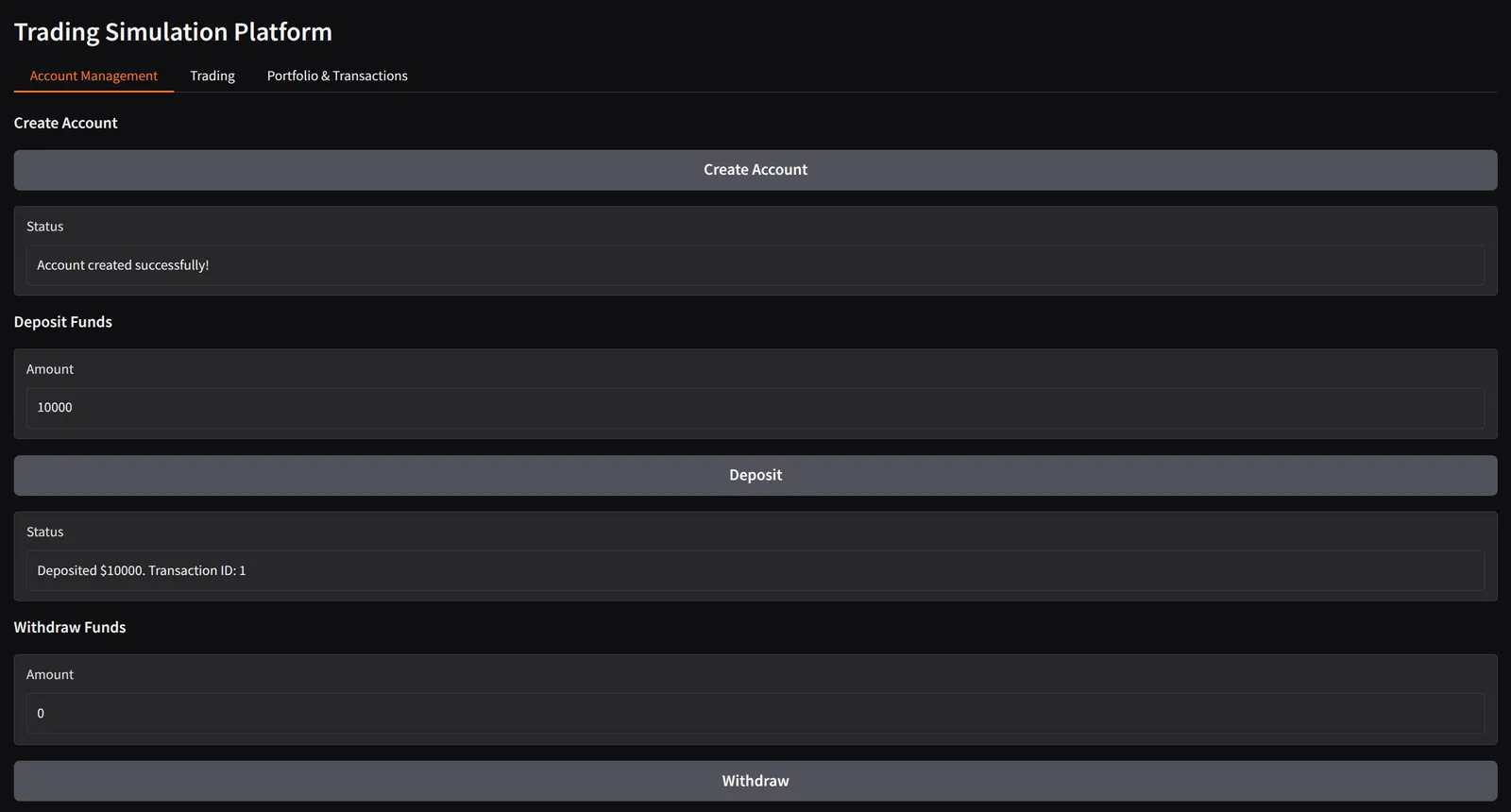

Requirements:

A simple account management system for a trading simulation platform.

The system should allow users to create an account, deposit funds, and withdraw funds.

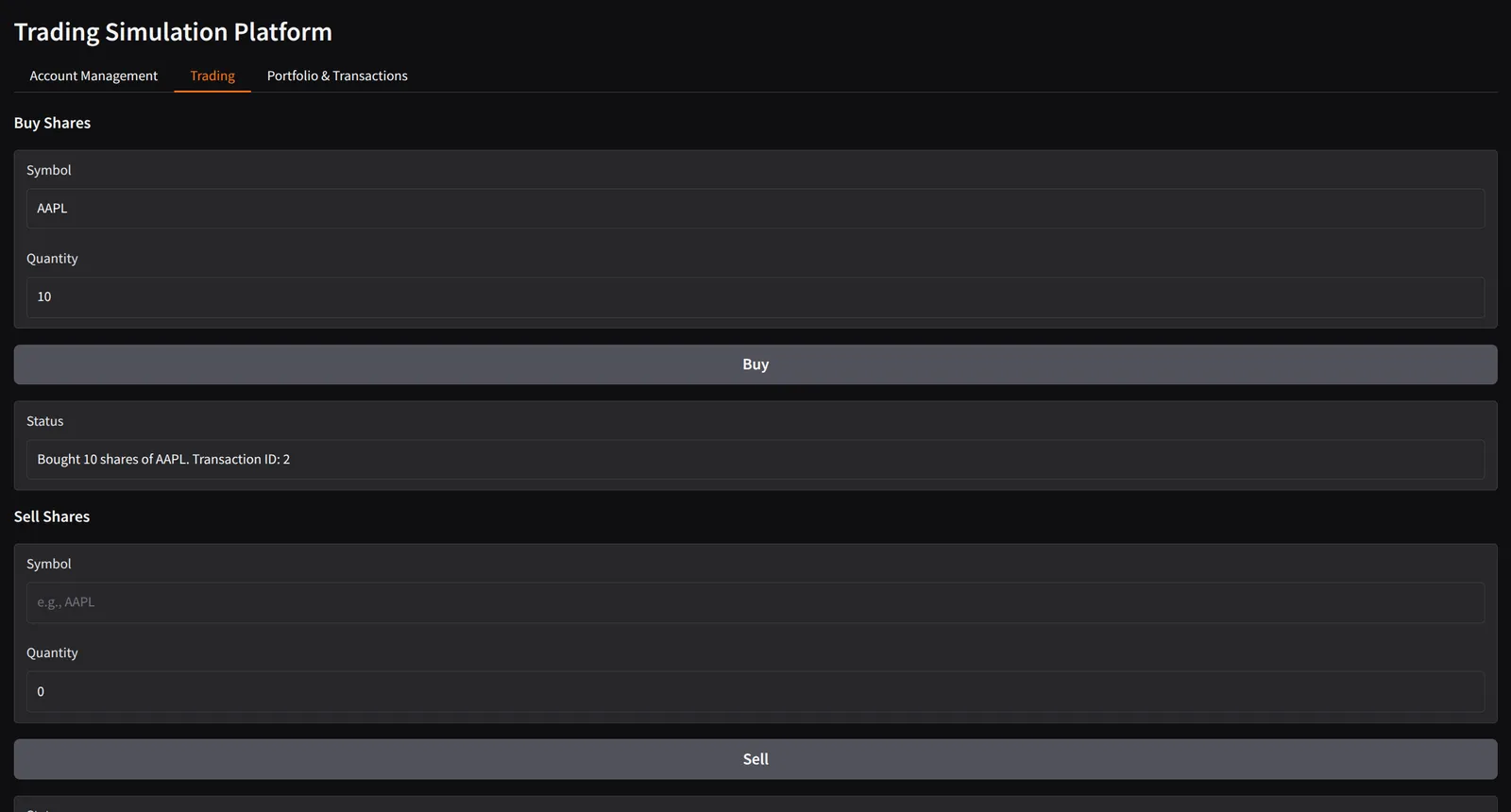

The system should allow users to record that they have bought or sold shares, providing a quantity.

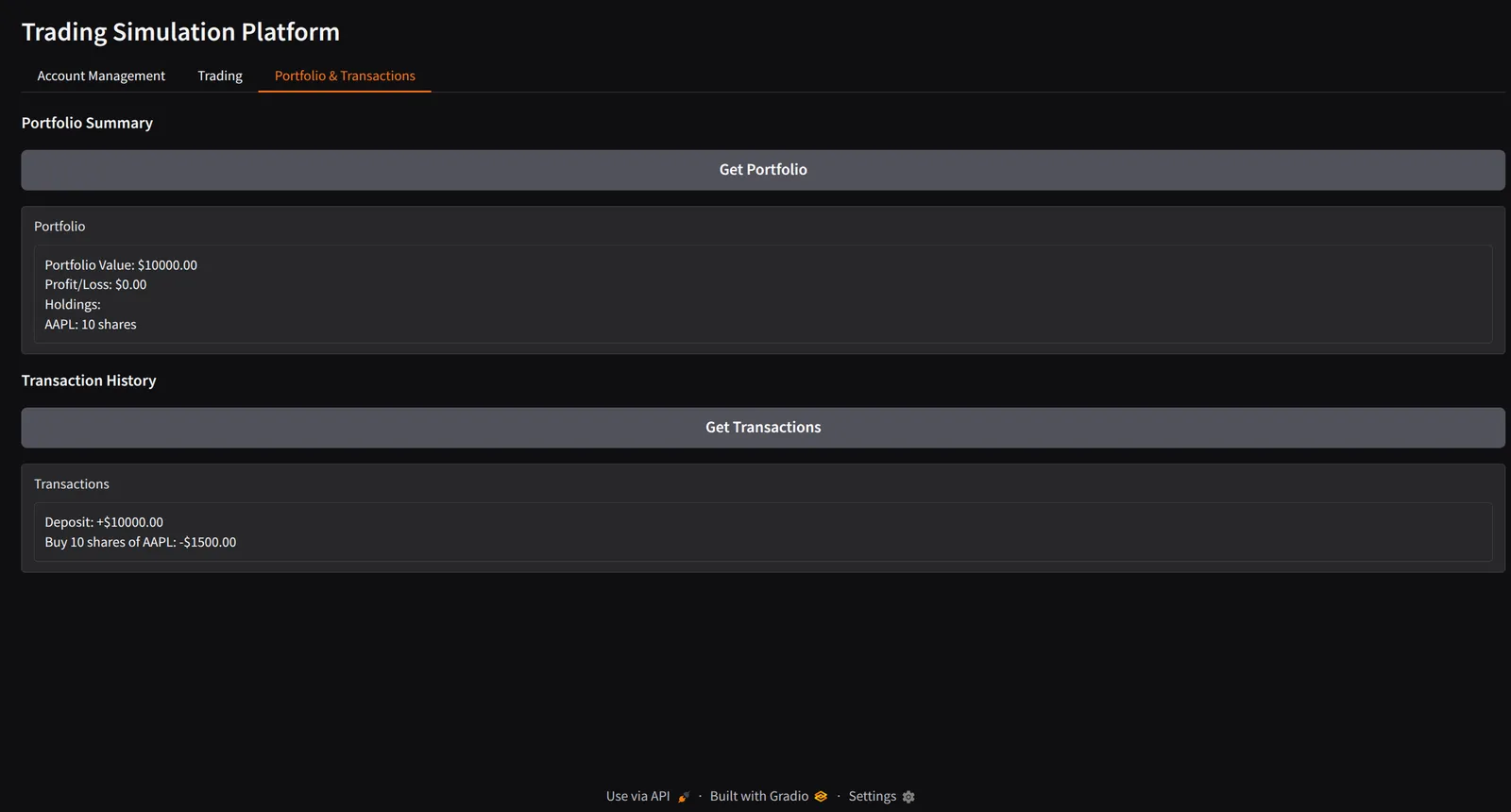

The system should calculate the total value of the user's portfolio, and the profit or loss from the initial deposit.

The system should be able to report the holdings of the user at any point in time.

The system should be able to report the profit or loss of the user at any point in time.

The system should be able to list the transactions that the user has made over time.

The system should prevent the user from withdrawing funds that would leave them with a negative balance, or from buying more shares than they can afford, or selling shares that they don't have.

The system has access to a function get_share_price(symbol) which returns the current price of a share, and includes a test implementation that returns fixed prices for AAPL, TSLA, GOOGL.
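For a sense of what the crew is asked to build, here is a compact, hand-written sketch of the core rules in the spec. It is not the crew's generated code. Assumptions: the prices in `get_share_price` are hypothetical placeholders (the spec only says AAPL, TSLA, and GOOGL return fixed prices), profit or loss is measured against net deposits, and money is a `float` for brevity where production code would use `Decimal`.

```python
from dataclasses import dataclass, field

# Hypothetical fixed test prices; the spec does not give the actual values.
_TEST_PRICES = {"AAPL": 190.0, "TSLA": 250.0, "GOOGL": 140.0}


def get_share_price(symbol: str) -> float:
    return _TEST_PRICES[symbol]


@dataclass
class Account:
    owner: str
    cash: float = 0.0
    net_deposits: float = 0.0  # deposits minus withdrawals: the P&L baseline
    holdings: dict[str, int] = field(default_factory=dict)
    transactions: list[tuple[str, str, int, float]] = field(default_factory=list)

    def _log(self, kind: str, symbol: str, qty: int, amount: float) -> None:
        self.transactions.append((kind, symbol, qty, amount))

    def deposit(self, amount: float) -> None:
        if amount <= 0:
            raise ValueError("deposit must be positive")
        self.cash += amount
        self.net_deposits += amount
        self._log("deposit", "", 0, amount)

    def withdraw(self, amount: float) -> None:
        if amount <= 0:
            raise ValueError("withdrawal must be positive")
        if amount > self.cash:
            raise ValueError("withdrawal would leave a negative balance")
        self.cash -= amount
        self.net_deposits -= amount
        self._log("withdraw", "", 0, amount)

    def buy(self, symbol: str, qty: int) -> None:
        if qty <= 0:
            raise ValueError("quantity must be positive")
        cost = get_share_price(symbol) * qty
        if cost > self.cash:
            raise ValueError("insufficient funds for this purchase")
        self.cash -= cost
        self.holdings[symbol] = self.holdings.get(symbol, 0) + qty
        self._log("buy", symbol, qty, cost)

    def sell(self, symbol: str, qty: int) -> None:
        if qty <= 0:
            raise ValueError("quantity must be positive")
        if self.holdings.get(symbol, 0) < qty:
            raise ValueError("cannot sell shares you do not hold")
        proceeds = get_share_price(symbol) * qty
        self.cash += proceeds
        self.holdings[symbol] -= qty
        if self.holdings[symbol] == 0:
            del self.holdings[symbol]
        self._log("sell", symbol, qty, proceeds)

    def portfolio_value(self) -> float:
        """Cash plus current market value of all holdings."""
        return self.cash + sum(get_share_price(s) * q for s, q in self.holdings.items())

    def profit_loss(self) -> float:
        return self.portfolio_value() - self.net_deposits

    def report_holdings(self) -> dict[str, int]:
        return dict(self.holdings)  # a copy, so callers cannot mutate state
```

Every guard runs before any state changes, so a rejected withdrawal, purchase, or sale leaves the account exactly as it was.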

Design here

Final Output:

Account Management

Trading

Portfolio & Transactions

The Local Advantage

What ties all these projects together? Local Dominance.

Every agent here runs its model on local hardware using Ollama. Whether it's an 8B-parameter model in the debate or the 30B coding specialist on the engineering team, the power is entirely in my hands.
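As far as I can tell, CrewAI hands `ollama_chat/...` model strings to LiteLLM, which then talks to the local Ollama server. A minimal sketch of talking to that server directly, assuming Ollama's default port 11434 and its `/api/chat` endpoint with streaming turned off:

```python
import json
import urllib.request

OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"  # Ollama's default local address


def build_chat_request(model: str, prompt: str) -> urllib.request.Request:
    """One non-streaming chat turn for a locally pulled model, e.g. 'llama3.1:8b'."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # return a single JSON object instead of a stream
    }
    return urllib.request.Request(
        OLLAMA_CHAT_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(model: str, prompt: str, timeout: float = 300.0) -> str:
    """Send the request; the reply text lives under message.content."""
    with urllib.request.urlopen(build_chat_request(model, prompt), timeout=timeout) as resp:
        return json.load(resp)["message"]["content"]
```

`chat("deepseek-r1:8b", "Explain Docker in one sentence.")` should work once `ollama pull deepseek-r1:8b` has been run and the server is up; nothing in the request leaves localhost.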

This codebase proves that you don't need to choose between simplicity and power. With CrewAI, you can start with a debate and end with a software empire. (Or at least a very productive localhost.)